Fundamentals of AI: How do we teach machines to act like humans?

Artificial intelligence has been a topic of discussion for a long time. The field is developing rapidly, remains highly relevant, and promises more and more opportunities in the near future. In this article, we cover the basics of AI and the technologies behind it.

What is Artificial Intelligence?

In the simplest terms, Artificial Intelligence is a field of Computer Science that works on creating machines capable of performing tasks that typically require human intelligence. Examples include computer systems that mimic human abilities such as reasoning, discovering meaning, generalizing, or learning from experience.

AI-driven systems process mountains of data, classify, analyze, and learn from it, improving themselves over time.

Different approaches to Artificial Intelligence

So how do we teach machines to think like we do? Or even teach machines to teach themselves to behave like humans? Over its history, the field has gone through several approaches. Let’s take a look at them.

Symbolic AI

Symbolic AI, also known as Classical AI or “GOFAI” (Good Old-Fashioned AI), was the dominant paradigm from roughly 1955 to 1990.

As the name implies, symbolic AI was built around the idea of symbols and rules. The adherents of this approach believed that almost any aspect of human intelligence can be described, or reduced to symbols, precisely enough for a machine to simulate it. Scientists developed tools to define and manipulate those symbols by creating explicit structures and rules of behavior.
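To give a feel for what "symbols and rules" can look like in practice, here is a minimal sketch of a rule-based system that derives new facts by forward chaining. The facts, the rules, and the `forward_chain` helper are all invented for illustration and are not taken from any particular symbolic AI system.

```python
# Hypothetical rules, invented for illustration only.
# Each rule is (conditions that must all be known facts, conclusion to add).
RULES = [
    ({"has_fur", "barks"}, "is_dog"),
    ({"is_dog"}, "is_mammal"),
    ({"has_feathers", "lays_eggs"}, "is_bird"),
]


def forward_chain(facts, rules):
    """Apply the rules repeatedly until no rule adds a new fact."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


derived = forward_chain({"has_fur", "barks"}, RULES)
print(sorted(derived))
```

Every conclusion here has to be anticipated by a hand-written rule, which is exactly what makes this style hard to scale.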

But the approach started to break down when faced with the complexity of the real world and the amount of messy data in it. You simply can’t define rules for every case that occurs, even for a task as narrow as detecting a dog in an image. Furthermore, some tasks, such as speech recognition or natural language processing, are very hard to express as rules at all.

Nowadays, Symbolic AI has given way to the more scalable and promising Machine Learning and Deep Learning. Still, some leading scientists believe that symbolic reasoning will remain a very important component of artificial intelligence.

Machine learning

Machine learning (ML) is a branch of artificial intelligence based on the idea that machines can learn from data, understand patterns, and make decisions with minimal human intervention. It was born from pattern recognition and the theory that computers can learn without being explicitly programmed to perform specific tasks. In simple terms, it allows machines to learn from experience, much as humans do.

Classical machine learning is often categorized by how an algorithm learns to become more accurate in its predictions. There are four basic types of machine learning.

- Supervised learning: Algorithms are trained using labeled examples, that is, inputs for which the desired output is known. The learning algorithm receives a set of inputs along with the corresponding correct outputs, and it learns by comparing its actual outputs with the correct outputs to find errors. It then modifies the model accordingly. Supervised learning is commonly used in applications where historical data predicts likely future events.

- Unsupervised learning: This type of machine learning involves algorithms that train on unlabeled data. The algorithm scans through datasets looking for any meaningful connection. The system is not told the “right answer”; the algorithm must figure out what is being shown. The goal is to find some structure within the data. Unsupervised learning works well for tasks such as identifying segments of customers with similar attributes who can then be treated similarly in marketing campaigns, or finding the main attributes that separate customer segments from each other.

- Semi-supervised learning: This approach to machine learning involves a mix of the two preceding types. It uses both labeled and unlabeled data for training – typically a small amount of labeled data with a large amount of unlabeled data (because unlabeled data is less expensive and takes less effort to acquire). This type of learning can be used with methods such as classification, regression, and prediction.

- Reinforcement learning: This is a behavioral machine learning model that is similar to supervised learning, but the algorithm isn’t trained using sample data. Instead, the model learns as it goes, through trial and error. Sequences of successful outcomes are reinforced to develop the best recommendation or policy for a given problem.
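As a rough illustration of the supervised case from the list above, the sketch below fits a straight line to a handful of labeled examples. The data, the `train` function, and the learning rate are made up for illustration. The technique shown is plain gradient descent on the mean squared error, which is only one of many ways a supervised model can be trained.

```python
# Labeled examples: each input x comes with its known correct output y.
# The data is made up for illustration and follows y = 2x + 1 exactly.
inputs = [0.0, 1.0, 2.0, 3.0, 4.0]
targets = [1.0, 3.0, 5.0, 7.0, 9.0]


def train(inputs, targets, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(inputs)
    for _ in range(epochs):
        # Error = actual output minus correct output, for every example.
        errors = [(w * x + b) - y for x, y in zip(inputs, targets)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, inputs))
        grad_b = 2.0 / n * sum(errors)
        # Modify the model in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train(inputs, targets)
print(f"w = {w:.3f}, b = {b:.3f}")
```

After training, `w` and `b` should end up very close to 2 and 1, the values used to generate the examples.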

What is Deep Learning?

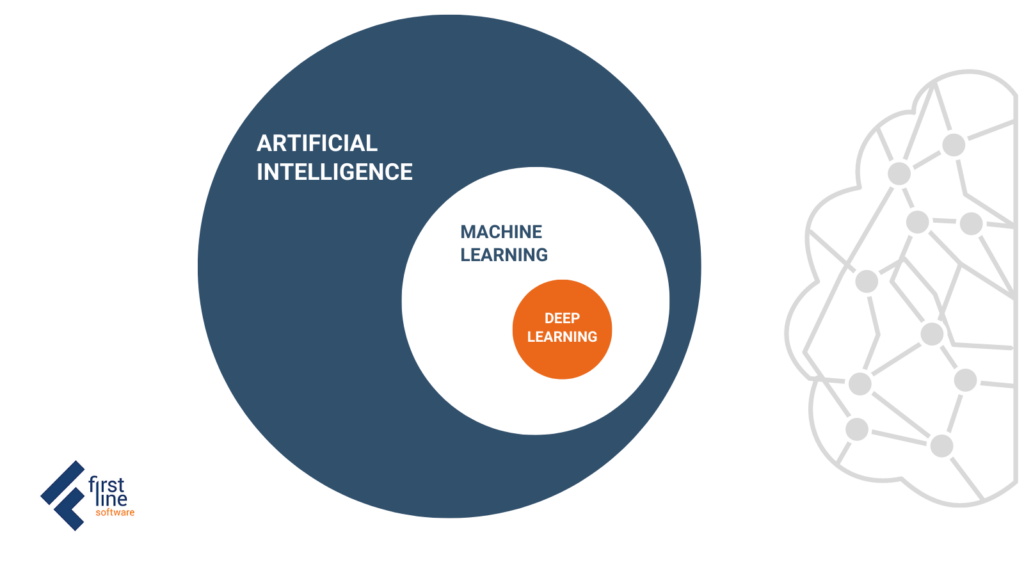

The terms deep learning and machine learning are often used interchangeably, but that is not quite right. While machine learning is a subset of artificial intelligence, deep learning is a subset of machine learning.

Classical machine learning includes relatively simple approaches such as linear regression or decision trees. Deep learning is much more mathematically complex and sophisticated: its algorithms are inspired by the biological neural networks of the human brain.

Deep learning algorithms can be considered an evolution of machine learning algorithms. They analyze data with a logical structure similar to the way a human draws conclusions, using a layered structure of algorithms called an artificial neural network (ANN).

Artificial Neural Networks (ANN)

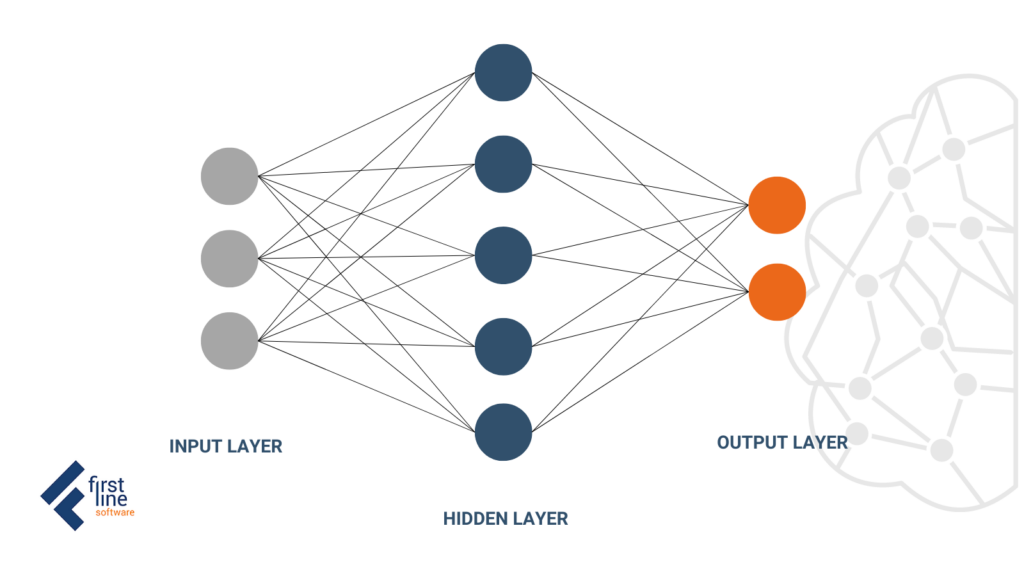

The simplest example of an ANN consists of three layers. The first is the Input layer, where the data is accepted, and the last is the Output layer, where we get the results. The “magic” happens in the middle, in the Hidden layers. This is where the computational process takes place. The more hidden layers a network has between the input and output layers, the deeper it is.

Each layer is made up of nodes, and the connections between the nodes of adjacent layers form a “network” – the neural network – of interconnected nodes. Similar in behavior to neurons in the human brain, nodes are activated when there are sufficient stimuli or input. This activation spreads throughout the network, creating a response to the stimuli (output).

In simple terms, the nodes process all the incoming data and find where the strongest relationships are. In the simplest networks, the received inputs are summed up, and if the sum is greater than a certain threshold value, the neuron “fires” and activates the neurons to which it is connected.
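The summing-and-threshold behavior described above can be sketched in a few lines. The `threshold_neuron` function and its numbers are illustrative only. The per-input weights are an assumption added here so that some inputs can count more than others; with all weights set to 1.0 the neuron simply sums its inputs.

```python
def threshold_neuron(inputs, weights, threshold):
    """Return 1 if the weighted sum of inputs exceeds the threshold, else 0."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total > threshold else 0


# With equal weights of 1.0 and a threshold of 1.5, the neuron behaves like
# a logical AND: it fires only when both inputs are active.
for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", threshold_neuron([a, b], [1.0, 1.0], threshold=1.5))
```

Lowering the threshold to 0.5 would turn the same neuron into a logical OR, which shows how much behavior is determined by these few numbers.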

Types of Neural Networks

There are different types of deep neural networks, from simpler to more complex. Each type has its advantages and disadvantages, and how well it copes with a task depends on the situation.

Feedforward neural networks – these are the type we’ve talked about above. Each perceptron (an artificial neuron) in one layer is connected to every perceptron in the next layer. Information flows from one layer to the next in the forward direction only.

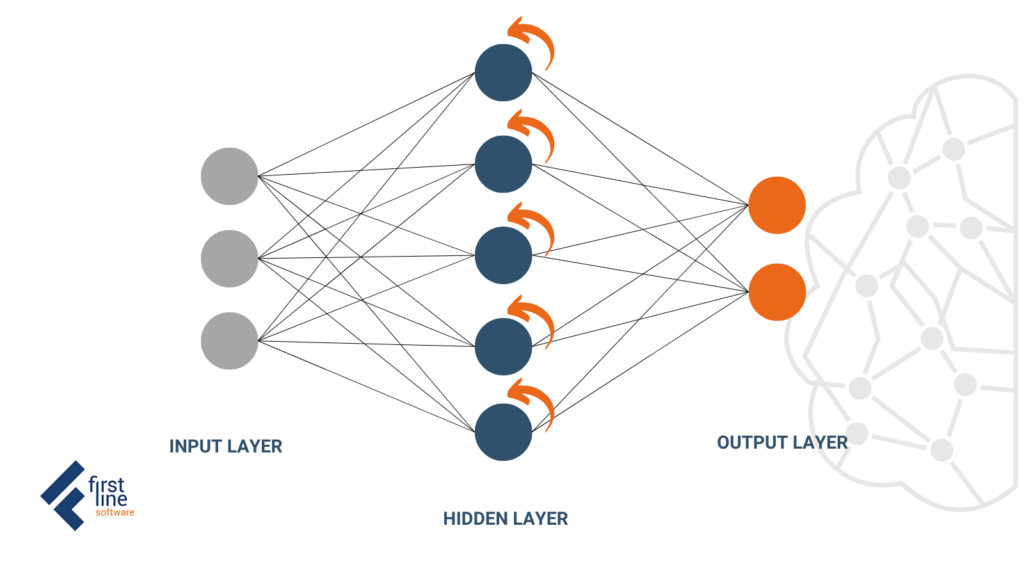
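A forward pass through a small fully connected network might look like the sketch below. The layer sizes, the weights, and the helper names (`dense_layer`, `feedforward`) are hypothetical, and the sigmoid activation is one common choice assumed here rather than something the article prescribes.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(inputs, weights, biases):
    """Fully connected layer: every output neuron sees every input."""
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, neuron_weights)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


def feedforward(inputs, layers):
    """Pass data through the layers in order, never backwards."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations


# Hypothetical weights, chosen by hand for illustration (not trained).
hidden = ([[0.5, -0.4], [0.3, 0.8], [-0.6, 0.1]], [0.0, 0.1, -0.1])  # 2 -> 3
output = ([[0.7, -0.2, 0.4]], [0.05])  # 3 -> 1

result = feedforward([1.0, 0.5], [hidden, output])
print(result)
```

In a real network the weights would be learned from data rather than written by hand.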

Recurrent neural networks – these neural networks work with sequential information, such as timestamped data from a sensor or a spoken sentence. Unlike in traditional neural networks, the inputs of a recurrent neural network are not independent of each other, and the output for each element depends on the calculations for the previous elements. RNNs are used for prediction, sentiment analysis, and other text-based applications.

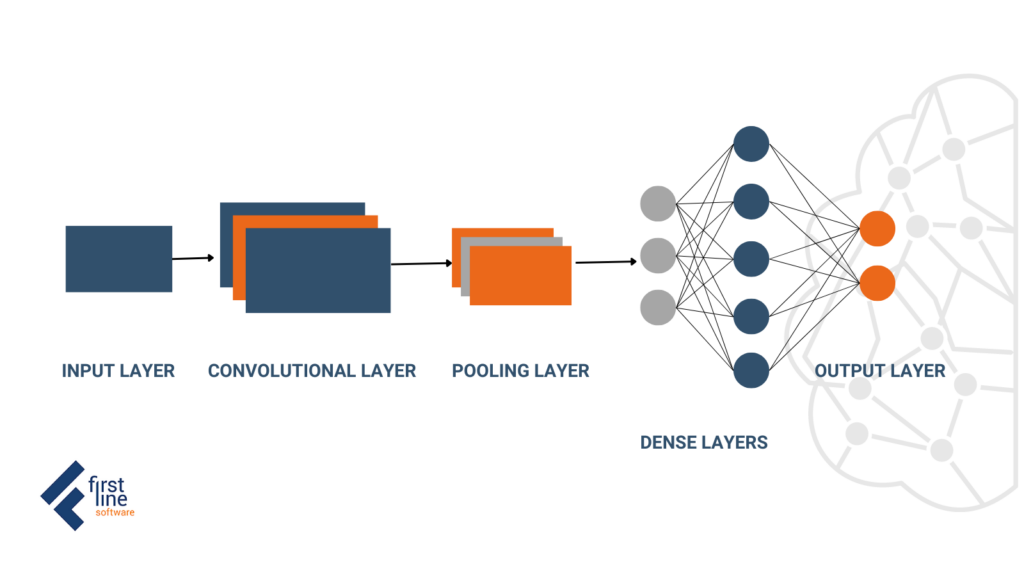
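The dependence on earlier elements can be illustrated with a single recurrent unit. This is a minimal sketch: the `rnn_forward` function, the scalar weights, and the `tanh` activation are assumptions made for the example, and practical RNNs use vectors and learned weight matrices.

```python
import math


def rnn_forward(sequence, w_input, w_hidden, bias):
    """Run a one-unit recurrent cell over a sequence of numbers.

    The hidden state h carries information from earlier elements, so the
    output at each step depends on everything seen so far.
    """
    h = 0.0
    outputs = []
    for x in sequence:
        h = math.tanh(w_input * x + w_hidden * h + bias)
        outputs.append(h)
    return outputs


# Hypothetical hand-picked weights (not trained).
params = dict(w_input=0.8, w_hidden=0.5, bias=0.0)

# Both sequences end with the same element, but their histories differ.
out_a = rnn_forward([1.0, 0.0, 1.0], **params)
out_b = rnn_forward([-1.0, 0.0, 1.0], **params)
print(out_a[-1], out_b[-1])
```

The two final outputs differ even though the last input is identical, because the hidden state remembers what came before.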

Convolutional neural networks – the building blocks of convolutional neural networks are filters, also called kernels. Kernels extract the relevant features from the input using the convolution operation. A typical CNN contains five types of layers: input, convolution, pooling, fully connected, and output. Each layer has a specific purpose, like summarizing, connecting, or activating. These types of networks are actively used for image classification and object detection. However, CNNs have also been applied to other areas, such as natural language processing and forecasting.
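To show what a kernel does, here is a small sketch of the convolution step on a toy image. The image, the kernel values, and the `convolve2d` helper are made up for illustration. In a trained CNN the kernel values are learned, and this sketch covers only the convolution layer, not pooling or the fully connected layers.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    Like most deep learning libraries, this computes cross-correlation:
    the kernel is not flipped before it is applied.
    """
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(k_rows)
                for j in range(k_cols)
            )
            for c in range(out_cols)
        ]
        for r in range(out_rows)
    ]


# A made-up 4x4 image: dark (0) on the left half, bright (1) on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# A hand-written kernel that responds to a dark-to-bright vertical edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
for row in feature_map:
    print(row)
```

The resulting feature map is nonzero only in the middle column, where the kernel sits on the boundary between the dark and bright halves.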

What’s next?

The scope of artificial intelligence extends from our daily lives, with recommendation services, smart social media feeds, and route planning in maps, to futuristic concepts such as humanoid robots and discussions about AI’s dangers. What we can’t deny is that AI is already giving us incredible opportunities in almost every area of human life.

If you want to know more about how AI and ML can be leveraged to increase your business efficiency with decision support, forecasting, or any other application, the First Line Software team of experts is always ready to help. Talk to us today!